Collecting network traffic, ØMQ and packetbeat

As part of running infrastructure it might make sense, or be required, to store logs of transactions. A good way to do this is to capture the raw, unmodified network traffic. For our GSM backend this is what we have to do, so I wrote a client that uses libpcap to capture data and sends it to a central server that stores the trace. The system is rather simple and is in production at various customers. The benefits of a central server are access to a lot of storage without granting too many systems and users access, central log rotation and compression, an easy way to grab all relevant traces, and more.

Recently the topic of doing real-time processing of captured data came up. I wanted to add some kind of side-channel that distributes data to interested clients before it is written to disk. For example, one might analyze an RTP audio flow for packet loss and jitter without actually storing the personal conversation.
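To make the RTP example a bit more concrete, here is a minimal sketch of how a side-channel consumer could track loss and jitter from the RTP header alone. It is not code from my collector. The `FlowStats` class, the 8000 Hz clock rate and the way arrival times are passed in are assumptions for illustration. The jitter estimate follows the interarrival jitter formula from RFC 3550. Duplicates, reordering statistics and 32-bit timestamp wraparound are deliberately ignored.

```python
import struct

# Fixed RTP header: V/P/X/CC, M/PT, sequence number, timestamp, SSRC
RTP_HEADER = struct.Struct("!BBHII")


def parse_rtp_header(packet: bytes):
    """Return (sequence, timestamp, ssrc) from the fixed 12-byte RTP header."""
    if len(packet) < RTP_HEADER.size:
        raise ValueError("packet too short for an RTP header")
    first, _marker_pt, seq, timestamp, ssrc = RTP_HEADER.unpack_from(packet)
    if first >> 6 != 2:
        raise ValueError("not RTP version 2")
    return seq, timestamp, ssrc


class FlowStats:
    """Loss and jitter for one RTP flow. The audio payload is never read."""

    def __init__(self, clock_rate=8000):
        self.clock_rate = clock_rate  # assumed: 8 kHz narrowband audio
        self.received = 0
        self.base_seq = None
        self.max_seq = None           # highest sequence seen, extended past 16 bits
        self.jitter = 0.0             # in RTP timestamp units
        self._last_transit = None

    def update(self, packet: bytes, arrival_seconds: float):
        seq, timestamp, _ssrc = parse_rtp_header(packet)
        self.received += 1
        if self.base_seq is None:
            self.base_seq = self.max_seq = seq
        else:
            # Forward distance modulo 2**16, so 65535 -> 0 counts as +1.
            delta = (seq - self.max_seq) & 0xFFFF
            if delta < 0x8000:        # ignore late (reordered) packets
                self.max_seq += delta
        # RFC 3550: J = J + (|D| - J) / 16, D = change in transit time
        transit = arrival_seconds * self.clock_rate - timestamp
        if self._last_transit is not None:
            d = abs(transit - self._last_transit)
            self.jitter += (d - self.jitter) / 16.0
        self._last_transit = transit

    @property
    def lost(self):
        if self.base_seq is None:
            return 0
        expected = self.max_seq - self.base_seq + 1
        return expected - self.received

    @property
    def jitter_ms(self):
        return self.jitter / self.clock_rate * 1000.0
```

A consumer would call `update()` with each captured UDP payload and its capture timestamp, then read `lost` and `jitter_ms` and throw the packet away.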

I didn’t create a custom protocol but decided to try ØMQ (ZeroMQ). It has many built-in strategies (publish / subscribe, round robin routing, pipeline, request / reply, proxying, …) for connecting distributed systems. The framework abstracts DNS resolution, connecting and re-connecting, and makes it very easy to build the standard message exchange patterns. I opted for the publish / subscribe pattern because the collector server (acting as publisher) does not care whether anyone is consuming the events or data. The messages I send are quite simple as well. There are two kinds of multi-part messages, one for events and one for data. A subscriber can easily filter for events or data, and filter for a specific capture source.
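The filtering works because a ZeroMQ SUB socket matches each subscription as a byte prefix against the first frame of a multi-part message. The sketch below models that rule in plain Python, without any ZeroMQ library, to show how a topic frame could carry both the kind and the capture source. The frame layout (`event.<source>` or `data.<source>` followed by one payload frame) and the source name `bsc1` are assumptions for illustration and not necessarily what my collector sends.

```python
from typing import Iterable, List


def make_message(kind: bytes, source: bytes, payload: bytes) -> List[bytes]:
    """Build a two-frame message: topic frame first, payload second.

    The "<kind>.<source>" topic layout is hypothetical.
    """
    if kind not in (b"event", b"data"):
        raise ValueError("kind must be b'event' or b'data'")
    return [kind + b"." + source, payload]


def matches(subscriptions: Iterable[bytes], frames: List[bytes]) -> bool:
    """Mimic SUB-socket filtering: deliver the message if any subscription
    is a prefix of the first frame. An empty subscription matches
    everything; no subscriptions at all match nothing."""
    return any(frames[0].startswith(prefix) for prefix in subscriptions)


msg = make_message(b"data", b"bsc1", b"<raw packet bytes>")

everything_data = [b"data."]       # all data, any source
one_source = [b"data.bsc1"]        # data from one capture source
events_only = [b"event."]          # events only

print(matches(everything_data, msg))
print(matches(one_source, msg))
print(matches(events_only, msg))
```

One consequence of prefix matching is that a subscription to `data.bsc1` would also match a source named `bsc10`, so with a layout like this the source names (or a trailing delimiter) need to be chosen with that in mind.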

Support for ZeroMQ was added in two commits. The first one adds basic ZeroMQ context/socket support and configuration, and the second adds sending out the events and data in a fire-and-forget manner. In a simple test set-up it seems to work just fine.

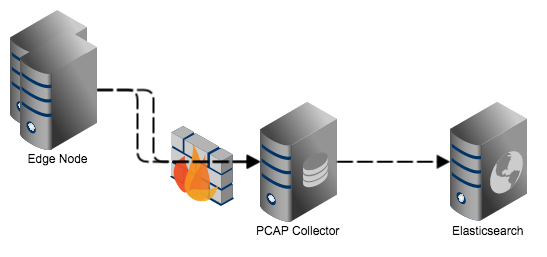

Since moving to Amsterdam I have been trying to attend more meetups. Recently I went to a talk at the local Elasticsearch group and found out about packetbeat. It is a program written in Go that uses a PCAP library to capture network traffic, has protocol decoders written in Go that do IP re-assembly and decoding, and uploads the extracted information to an instance of Elasticsearch. In principle it sits somewhere between my PCAP system and a distributed Wireshark (without the same number of protocol decoders). In our network we wouldn’t want the edge systems to talk directly to the Elasticsearch system, and I wouldn’t want to run decoders as root (or at least with extended capabilities).

As an exercise to learn a bit more about the Go language I tried to modify packetbeat to consume trace data from my new data interface. The result can be found here, and I do understand (though I am still hooked on Smalltalk/Pharo) why a lot of people like Go. The built-in fetching of dependencies from GitHub is very neat, and the module and interface/implementation approach is both easy to comprehend and powerful.

The result of my work allows something like in the picture below. First we centralize traffic capturing at the pcap collector, and then packetbeat picks up the data, decodes it and forwards it to Elasticsearch for analysis. Let’s see if upstream merges my changes.