Cisco probeless monitoring protocol

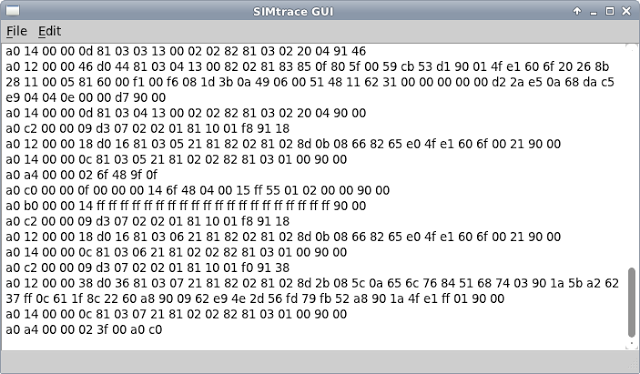

The Cisco probeless monitoring protocol (PMP) is a proprietary protocol used by the Cisco ITP to forward M3UA/MTPL3 messages to another server. The data is sent over UDP on port 33500. Previously I used Okteta to study the file format; this time I used Pages and Hex Fiend. To understand the basic structure one needs to start somewhere, and the first assumption for a telco protocol is DER- or TLV-encoded data. In Wireshark one could…
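The TLV guess mentioned above can be checked mechanically before spending time in a hex editor. The following is a minimal sketch, not the actual PMP layout: the function name `walk_tlv`, the one-byte tag and one-byte length sizes, and the sample bytes are all assumptions made up for illustration. It simply tries to read a payload as back-to-back tag/length/value records and gives up as soon as a length does not fit the buffer.

```python
def walk_tlv(data: bytes, tag_size: int = 1, len_size: int = 1):
    """Try to read `data` as consecutive tag/length/value records.

    Returns a list of (tag, value) tuples, or None if a header or value
    runs past the end of the buffer, which suggests the TLV guess
    (or the assumed field sizes) is wrong.
    """
    records = []
    offset = 0
    header = tag_size + len_size
    while offset < len(data):
        if offset + header > len(data):
            return None  # not enough bytes left for a tag and length
        tag = int.from_bytes(data[offset:offset + tag_size], "big")
        length = int.from_bytes(data[offset + tag_size:offset + header], "big")
        offset += header
        if offset + length > len(data):
            return None  # declared length overruns the payload
        records.append((tag, data[offset:offset + length]))
        offset += length
    return records


# Made-up bytes, NOT taken from a real PMP capture.
sample = bytes([0x01, 0x02, 0xAA, 0xBB, 0x02, 0x00])
result = walk_tlv(sample)
```

If the walk returns `None` for most captured UDP payloads, the data is probably not plain TLV with those field sizes, and it is worth trying other sizes or a fixed-header layout instead.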